In a recent discussion of the ranking systems used to seed tournaments, I realized that I consider predictive power the best way to evaluate a ranking system. I therefore decided to test a set of ranking systems to see which one was best at predicting tournament outcomes.

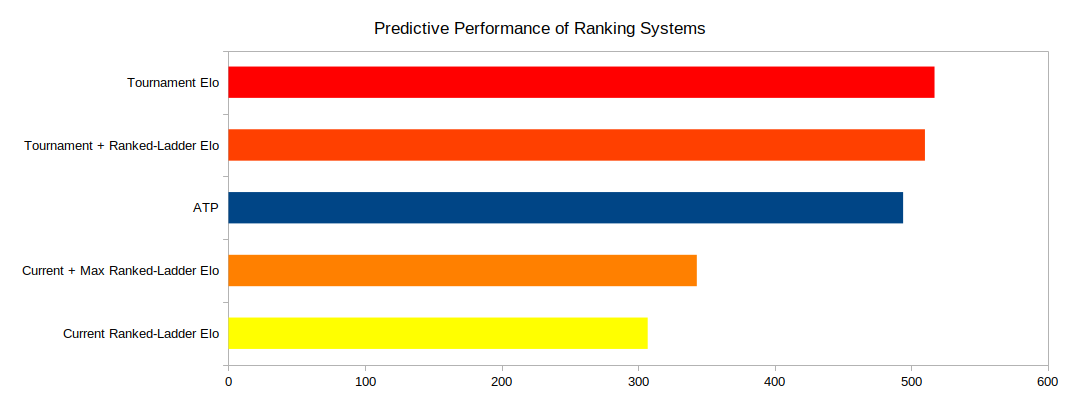

The ranking systems I have come across are:

- Current ranked-ladder elo: used by Wandering Warriors Cup, discussion of which inspired this post

- Average of max ranked-ladder elo and current ranked-ladder elo: used by Master of Socotra 2 and Return of the Clans

- Tournament elo: maintained by the fine people at aoe-elo, who calculate elo based on games in tournaments rather than from the ranked ladder

- Combination of tournament and ranked-ladder elo: used for World Rumble, weighted one-half tournament elo, one-quarter max ranked-ladder elo, and one-quarter current ranked-ladder elo

- ATP: a non-elo alternative based on the system used for professional tennis, where points are awarded based on where a player places in a tournament and the prestige of the tournament. @robo developed the prestige algorithm and maintains the spreadsheet with all the data and calculations.
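
The two blended ratings in the list above are simple weighted averages. Here is a minimal sketch of the arithmetic; the function names and the sample ratings are made up for illustration and are not taken from any tournament's actual seeding sheet.

```python
def average_max_current(max_elo: float, current_elo: float) -> float:
    """Average of max and current ranked-ladder elo (hypothetical helper)."""
    return (max_elo + current_elo) / 2


def combined_rating(tournament_elo: float, max_elo: float, current_elo: float) -> float:
    """One-half tournament elo, one-quarter max ladder elo, one-quarter current ladder elo."""
    return 0.5 * tournament_elo + 0.25 * max_elo + 0.25 * current_elo


# Illustrative numbers only, not real player ratings.
print(average_max_current(2600, 2500))    # 2550.0
print(combined_rating(2400, 2600, 2500))  # 2475.0
```

Since the weights sum to one, the combined rating stays on the same scale as its inputs, provided tournament elo and ladder elo are themselves on comparable scales.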

In the graph, tournament elo, the mix of tournament and ranked-ladder elo, and ATP performed best and are within a couple of percent of each other. The average of max and current ranked-ladder elo scored about one-third worse than the average of the top three, and current ranked-ladder elo alone scored about 40% worse.

The way I tested the systems was to have each of them predict how a series of single-elimination tournaments should play out given the player rankings at the time, then compare these predictions to what actually happened.

For tournaments, I chose all the S- and A-Tier 1v1 tournaments from 2021 and 2022 that finished with a standard single-elimination bracket. I used the NCAA.com system for evaluating March Madness brackets to score each prediction. The final scores in the graph are the cumulative totals for all the tournaments.
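
The procedure can be sketched roughly as follows. This is an illustration, not the exact code behind the graph: the player names and ratings are invented, the bracket is assumed to be a power-of-two list in bracket order, and the point values assume a doubling scheme (1, 2, 4, ... per round) of the kind commonly used for March Madness bracket games. Substitute the real values if they differ.

```python
from typing import Dict, List


def predict_bracket(bracket: List[str], ratings: Dict[str, float]) -> List[List[str]]:
    """Predict winners round by round, always advancing the higher-rated player.

    `bracket` lists players in bracket order: slots 0 and 1 meet first, then 2 and 3, etc.
    """
    rounds = []
    current = list(bracket)
    while len(current) > 1:
        winners = [
            max(current[i], current[i + 1], key=lambda p: ratings[p])
            for i in range(0, len(current), 2)
        ]
        rounds.append(winners)
        current = winners
    return rounds


def score_prediction(predicted: List[List[str]], actual: List[List[str]]) -> int:
    """Score a prediction: a correct pick in round r (0-indexed) is worth 2**r points.

    The doubling values are an assumption, not confirmed scoring rules.
    """
    total = 0
    for round_index, (pred, act) in enumerate(zip(predicted, actual)):
        points = 2 ** round_index
        total += sum(points for p, a in zip(pred, act) if p == a)
    return total


# Hypothetical four-player bracket.
bracket = ["A", "B", "C", "D"]
ratings = {"A": 2500, "B": 2300, "C": 2400, "D": 2450}

predicted = predict_bracket(bracket, ratings)  # [["A", "D"], ["A"]]
actual = [["A", "C"], ["C"]]                   # what "really" happened

print(score_prediction(predicted, actual))     # 1
```

Summing `score_prediction` over all tournaments for each ranking system gives one cumulative total per system, which is the kind of number the graph compares.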

I ran the same tests with many different parameters: just S-Tier, just A-Tier, only Random Map, just the last three rounds of the tournaments, and using an alternative March Madness scoring system. These other tests make the story more complicated without changing its fundamentals. All the variations had the same three at the top and very close together, and most had the same order for the top three. The average of max + current ranked-ladder elo was always fourth and current ranked-ladder elo always came in last. I therefore chose to ignore these tests for the main discussion, but I wanted to enter this caveat here for those who like caveats.

As the results are based on only twenty tournaments over the course of one year, I have no idea how robust they are. But I hope they provide some assistance to tournament organizers when deciding how to seed tournaments and to the rest of us when second-guessing them.
